What is agentic AI — and why the market is moving in this direction

From Chatbots to Autonomous Workflows: Engineering Trust in Agentic Loops.

Iago Mussel

CEO & Founder

For the past three years, AI’s main job was to respond.

You asked a question. It answered. You gave it a document. It summarized. You asked it to write code. It wrote code. The model was a very smart autocomplete — genuinely powerful, but fundamentally passive.

Something changed. And it’s not a new product category or a new model announcement. It’s a different relationship between AI and work.

What “agentic” actually means

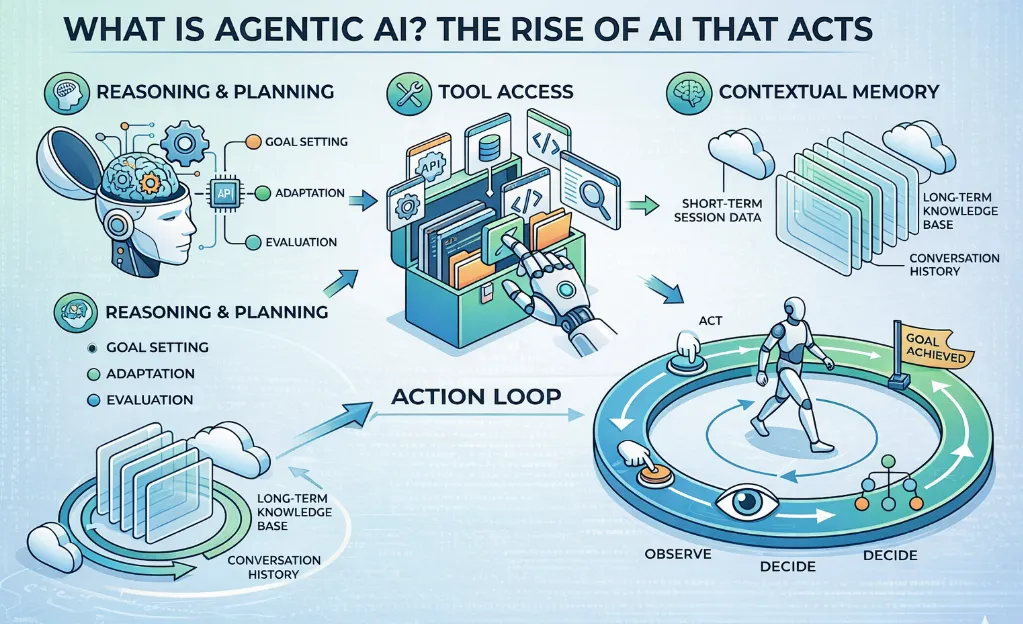

An agentic AI system pursues a goal across multiple steps. It uses tools. It maintains context. It adapts based on what it discovers along the way.

Five things make it work:

A model that reasons. Not just predicting the next token — actually evaluating options, selecting actions, and deciding what to do when the first attempt doesn’t work.

Tools and World Models. APIs, code execution, web search, database access, file systems. Moreover, with the rise of Large Action Models (LAMs), agents can increasingly “see” and navigate user interfaces directly, rather than just reasoning in text. The agent interacts with the world, it doesn’t just describe it.

Memory. Short-term context within a task. Optionally, longer-term memory that persists across sessions.

State Management. An agent isn’t just a loop; it’s a state machine. It needs the ability to pause for human approval, hydrate its context from a database, and resume execution without losing its place.

A loop. The agent acts, observes the result, decides the next step, and repeats. It doesn’t stop after one response.

This is fundamentally different from a chatbot that calls a function when you say the magic words. An agent is running a plan. A chatbot is following a script.

Why this is happening now and not three years ago

A few massive shifts converged between 2023 and 2025 that made agents actually viable in production.

Context windows got big enough. Earlier models held a few pages of context. Current models hold hundreds — enough to reason across a full codebase, a complete document set, a long conversation history. That matters because multi-step tasks need that context to make coherent decisions.

Tool use became reliable. Early agents were unreliable in a specific way: the model would pick the wrong tool, misinterpret the result, and confidently proceed in the wrong direction. That problem is largely solved. The models are much better at tool selection and result interpretation.

Inference got cheap—and routing got smart. Running an agent means many model calls per task. When inference was expensive, the economics only worked for narrow, high-value use cases. Now, it’s not just that inference is cheaper; it’s that routing has become sophisticated. We use small, blisteringly fast models for the routine loop operations, and “reserve” the frontier models for high-stakes reasoning steps.

The idea was always sound. The infrastructure just caught up.

What actually changes

The biggest change is the scope of what AI can handle without a human orchestrating every step.

Before agents, AI assisted with discrete tasks. Write this email. Summarize this document. Explain this error. One prompt, one output, done.

With agents, AI handles processes. Research this prospect, check CRM history, draft an outreach sequence, and log the activity. Review this PR, run the tests, flag security patterns, and leave structured comments. Tasks that used to require a human to coordinate each step.

The output isn’t a single artifact anymore. It’s a completed workflow.

The Agentic UX Shift

Because the output is a workflow, the interface is changing too. We’re moving away from the “Chat Box” as the primary UX. If an agent is truly acting autonomously, the user shouldn’t be forced to watch a terminal scroll or a chat bubble stream. Instead, they should be looking at a “Diff” of the world state—what the system looked like before the agent ran, and what it proposes to change.

That changes the economics of automation. A lot of processes that weren’t worth automating — because they required too much human judgment to orchestrate — are now viable candidates.

The failure modes you need to plan for

Agentic systems fail in ways that simple chatbots don’t. Worth knowing before you build.

Cascading errors. A wrong decision in step 2 compounds by step 10. The agent builds on a faulty premise and gets very confident in the wrong direction. This is where agentic observability and guardrail middleware become critical—you need a layer that intercepts a bad thought before it becomes an expensive API call.

Token exhaustion. Without explicit termination conditions, agents can loop. An unbounded loop doesn’t just waste time; it can eat your entire token budget in 30 seconds. Set hard limits on steps, API calls, and wall-clock time.

Silent actions. An agent that can write to a database, send an email, or modify a file needs a complete audit trail. Every tool call, every decision — logged. “The agent decided” is not a defensible answer when something goes wrong.

Scope creep. Agents given broad tool access will sometimes use tools in creative ways you didn’t intend. Scope the permissions tightly. Least privilege applies to agents exactly as it does to humans.

These aren’t reasons to avoid agentic systems. They’re the reasons to build them carefully rather than just copying a demo from a conference talk.

Where this is actually landing

The hype peaked in 2024. The practical cycle is happening now.

Teams that shipped experimental agents in 2024 spent 2025 learning what actually worked in production versus what worked in a demo. The patterns that survived are consistent:

- Narrow scope, clear goal, well-defined tools

- Human review at high-stakes decision points before irreversible actions (viewing the “Diff”)

- Complete logging and the ability to replay any run

- Gradual autonomy expansion — prove the agent on low-stakes tasks first, then widen the scope

The demos sold “full autonomy.” What’s actually working in production is “supervised autonomy.” The agent does the work. A human reviews before it matters.

That’s not a failure of the technology. That’s how you build trust in a new kind of system. The autonomy expands as the trust builds.

I work with teams building production systems and developer tooling. If this topic resonates, you can find more of my work at https://huntermussel.com.